- This event has passed.

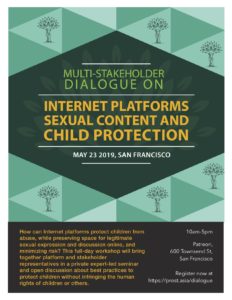

Multi-Stakeholder Dialogue on Internet Platforms, Sexual Content, & Child Protection

May 23, 2019, all day

How can Internet platforms protect children from abuse, while preserving space for legitimate sexual expression and discussion online, and minimizing risk? This full-day workshop will bring together platform and stakeholder representatives in a private expert-led seminar and open discussion about best practices to protect children without infringing the human rights of children or others.

The workshop will facilitate a dialogue with experts and stakeholders who are normally excluded from the development of child protection policies by Internet platforms, so that industry participants can learn how to make these policies more evidence-informed and more compliant with human rights standards. The result will be improved accuracy in the moderation of sexual content: platforms will remove more material that is harmful to children and has no protected expressive value, and less material that is lawful, such as accurate information on child sexual abuse prevention.

Speakers

Facilitator

Agenda

A nuanced approach to the restriction of sexual content can protect children better, improve user experience, and comply with human rights obligations, while managing risk

Experts in child sexual abuse prevention and those who are affected by sexual content bans share insights from research

Group work on examples of sexual content restriction and how these can become better informed and more inclusive

Participants will identify key lessons and distill them into points for inclusion in future jointly developed best practice recommendations

Quotes

Platforms of all sizes need to be empowered to be made more effective contributors towards child sexual abuse prevention, through a more nuanced and better-informed approach towards content moderation and censorship.

This new sex-positive approach provides another necessary avenue through which we can continue to work together to address, and ultimately prevent, child sexual abuse.

Oftentimes, policies that sound sensible in principle wind up perpetuating the very harms that are sought to be extinguished. Input from varied perspectives is essential to diminishing this risk.

About Prostasia Foundation

Prostasia Foundation is the only 501(c)(3) nonprofit child protection organization that is progressive and sex-positive, and supports the free and open Internet. We came together in April 2018, one week after the Fight Online Sex Trafficking Act (FOSTA) was signed into law. Our diverse team includes digital rights activists, lawyers, mental health professionals, and representatives of sex workers and other stakeholders who are normally excluded from discussions about child protection.