Recently I watched a video of a naked twelve year old girl—a victim of child sex trafficking—being slapped around by an adult who was attempting to photograph her. In another scene, the child is heard screaming as another man rapes her. I confess that I paid money for this video. But I didn’t transfer Bitcoins to obtain it on some hideous dark web market. I actually rented it for $2.99 on Google Play.

The video was the 1978 film Pretty Baby (74% on Rotten Tomatoes). I was watching it with my colleague Meagan as we were deciding on a film about child sexual abuse for Prostasia Foundation to screen to accompany a discussion about prevention. We ultimately settled on Lolita instead, because it is frankly a better film, and raises more of the questions that we think need to be openly discussed.

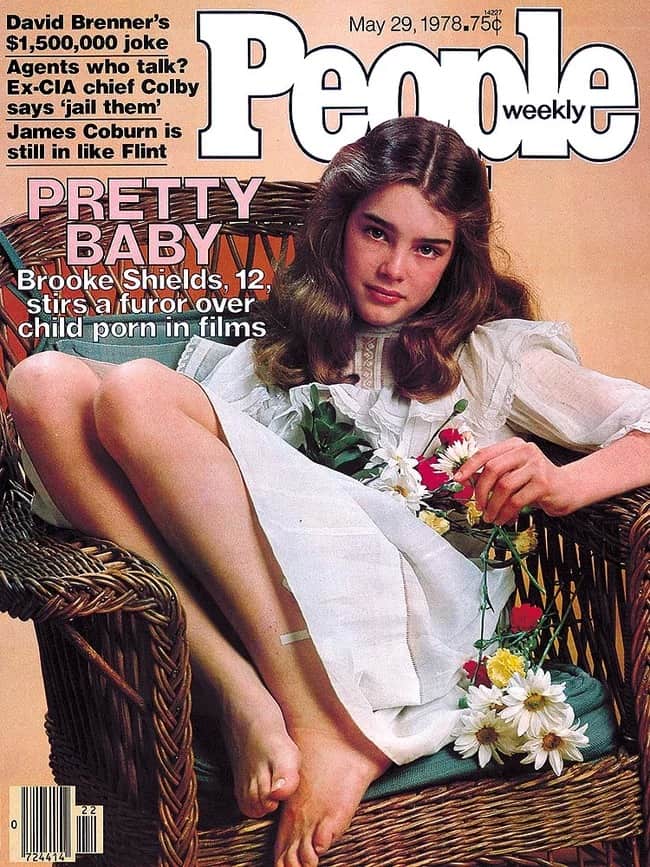

As problematic as the film is by today’s standards, mercifully its child star Brooke Shields today still regards her performance in Pretty Baby as something to be proud of. In the 1970s, her mother and manager Teri Shields sought to protect her daughter from adverse public media attention over the film (“Brooke Shields, 12, stirs a furor over child porn in films,” screams People magazine over her cover shot, while gushing inside, “The skin is flawless; eyes deep blue; lashes black; hair silken. The direct gaze is full of ambivalent sexuality.”)

Today, it would be much more difficult to shield the child star in such a film from exploitation and abuse, regardless of the good intentions of her parents and the filmmaker (as stars like Natalie Portman have found). We live in a different age, in which even innocent YouTube videos of children tend to attract inappropriate sexualized comments from anonymous strangers. Google’s current, still-evolving response to this problem has been to leave such videos up but to disable all comments on them.

One can argue about the merits of this blanket measure as a solution, but it does illustrate two possible responses to the misuse of images of minors, the first being simply to censor them. This is the only appropriate response when the images are so overtly sexual that the law defines them as “child pornography,” because the production or distribution of such images causes (or can safely be assumed to cause) direct harm to the child. (Some jurisdictions recognize a narrow exception for images that are self-produced, and distributed only to a close-in-age dating partner.)

But for depictions of children that are artistic, educational, or otherwise simply innocent, outright censorship may not be the right tool for the problem. A child’s body is not inherently obscene, and images of it are not inherently abusive. However, the way in which such images are used can easily be abusive: for example, they can be used to sexually harass, groom, or humiliate a child, and we have a responsibility as a society to protect children from such harms. So the second and generally better response to the misuse of such images is to address that problem, their misuse, directly.

To the extent that Internet companies have a role in fulfilling that responsibility, banning users who leave inappropriate comments on images of real children, or who share these images in a sexual context in private groups, are just two examples of sensible and proportionate steps that can be taken. Parents can be mindful of how much of their children’s lives are shared on social media. Artists can tag their work appropriately. And educators can play their part by helping young students understand how sexual selfies can be misused, and why it is wrong to share others’ selfies without consent.

One standard for Hollywood, another for fanart

Another contentious grey area is depictions of children that are either enacted by adult actors or models, or are drawn by hand or computer. The distribution of such images cannot directly harm the child depicted in them, because that child does not exist. We explained this in the comments we submitted to the United Nations Committee on the Rights of the Child last month, opposing a proposal that would extend the international legal definition of child pornography to include art, fiction, and performances that depict minors sexually.

On its face, the UN proposal would require a wide range of mainstream content to be banned—not merely 40 year old films like Pretty Baby, but also current Hollywood productions. For example, the third episode of the 2019 Hulu network series PEN15 contains extended scenes of a 13 year old masturbating, including one shot of her hands sticky with vaginal lubrication. (The actress playing the role was 30 years old.) Big Mouth is another series that depicts minors sexually, in the format of an animated comedy about puberty.

But while these depictions may be seen as “legitimate” because they are from mainstream Hollywood studios, the same leeway is not offered to the much larger body of mostly non-commercial art and fiction produced by independent artists and fans. If Google decides to stop selling Pretty Baby, one film might go temporarily out of print—no great loss. But when a large platform like Tumblr changes its rules to prohibit sexual content in an effort to address child sexual abuse, this affects thousands of individual creators, and the communities they have built up around them. This disproportionately affects those who are not so well represented in mainstream media, such as LGBTQ+ people.

Real child sexual exploitation material must be eliminated from the Internet and from society. But that term (CSEM, defined in law as child pornography) has until recently had a very specific meaning, and it has been tied closely to harm to an actual child. When we begin to extend this definition to include art depicting an imaginary child, there is no such harm to the subject depicted. But the harms to innocent members of the community can be great.

The lack of a real child victim makes the argument for censoring artistic depictions much weaker, and ties it to the assumption that these depictions may indirectly contribute towards a culture that is more tolerant of child sexual abuse. But as we pointed out to the United Nations, there is no evidence to support that assumption, and some evidence pointing in the opposite direction, suggesting that such depictions may reduce rates of sexual offending against minors. In either case, we think that further research on this question is urgently required.

Even if the censors’ assumption is true (that depicting child nudity or sexuality may send a false message about children’s accessibility as sexual objects for adults), this doesn’t amount to an argument for blanket censorship. Instead, it provides all the more reason to engage with and criticize that reading of the work, reasserting that in real life, children cannot consent to sex with adults, and that depictions of real children being sexually abused or exploited are inherently harmful and unacceptable.

Even in a society where many other works are censored—China—the open availability of the book Lolita has recently generated a frank and open discussion. In his afterword to the novel, Nabokov denied that there was a moral to the story. Yet without his art to stimulate such discussion, such issues will frequently be avoided, because they are taboo. By censoring art that addresses the sexuality and abuse of minors, we only strengthen and sustain that taboo, and chill discussion about child sexual abuse and its prevention.

“But pedophiles”

Even before she appeared in Pretty Baby, Brooke Shields posed for an infamous nude portrait that appeared in a Playboy publication, Sugar & Spice. She was 10 years old. Although Teri Shields had originally consented to the photoshoot, she subsequently sued the photographer for continuing to distribute the portrait, which she claimed was damaging her daughter’s reputation. The judge in that case ruled that the photographs from the shoot “have no erotic appeal except to possibly perverse minds.” One of those photographs went on to be reappropriated by artist Richard Prince under the title Spiritual America, and was exhibited at the Guggenheim in New York.

There are many uncomfortable aspects to this saga, not least of which is the judge’s acknowledgement that “perverse minds” might well take erotic pleasure from this photograph of a real child. More uncomfortable still is the knowledge that there isn’t really anything that we can do about that. There will always be people who find prepubescent minors sexually attractive—which is essentially the medical definition of pedophilia—and the current scientific consensus is that there is no cure for that condition. Therefore, there will always be depictions of children that are artistic or innocent in intent, but that do appeal to those with pedophilic sexual interests.

Many of the laws and policies that we make to censor such depictions seem to be motivated not by the desire to curb abusive or harmful conduct, but because we think that it is morally wrong even to view such depictions and to locate eroticism in them—which is why Spiritual America, with its overtly erotic framing, is such an uncomfortable work to view. This is, surely, the unspoken motivation behind the bans on young-looking sex dolls that are currently poised to pass into law in three states, since there is no evidence that owning such dolls leads to the abuse of real children.

Earlier this month, anti-pedophile activists picketed a nudist family swim on the grounds that, despite screening for registered sex offenders, the organizers could not guarantee that no pedophiles would be present. That is perfectly true, since most pedophiles (as far as we know) never sexually offend against a child. The protesters went on to complain that the children attending “will be scarred for life” by being seen in the nude by someone who finds them sexually attractive. Is this a valid concern, or a sex-negative projection?

In last week’s Savage Love column, sex columnist Dan Savage spoke with pedophilia expert Michael Seto about a reader who found an acquaintance looking at photos of young boys on Instagram. Dan wrote, “while it may not be pleasant to contemplate what might be going through a pedophile’s mind when they look at innocent images of children, it’s not against the law for someone with a sexual interest in children to dink around on Instagram.”

As tough as it is to swallow, Dan is right. Preventing pedophiles from getting off is not the goal of child pornography law, nor is that goal even achievable. As loathsome as it is to think about a pedophile getting off to an image of a child, we could never censor everything that they might find arousing. If, instead, preventing harm to children is really our goal, then eliminating abusive behavior that causes such harm has to be our priority. Eliminating the sexual attraction that motivates that behavior is secondary, largely because scientists don’t believe it to be possible.

To accept this fact is not “defending” or “normalizing” pedophilia or treating it as “acceptable” (nor does it imply, as conservative slippery slope arguments inevitably continue, that it should be defined in law as a sexual orientation or be added to the LGBTQ+ spectrum).

Two-thirds of the pedophiles polled by the peer support community Virtuous Pedophiles claimed they would take a pill to eliminate their condition. While no such pill exists, experts believe that the sexual attraction itself doesn’t have to lead to abuse. Therefore, as a broader community, we have an opportunity and a responsibility to prevent people who would engage in image-based sexual offenses against children from doing so. One of the ways that we can do that is by offering them a pathway away from offending, and towards sources of support. For example, Prostasia Foundation is collaborating with a Swedish project called Prevent It, which aims to help change the behavior of those who resort to seeking out illegal images of minors on the dark web.

Like other support websites and forums, Virtuous Pedophiles also bans users from sharing pictures of children—even innocent ones—because in that specific context, it would be inappropriate. In the context of a pedophilia support group, limiting access to photographs of children is a sensible and proportionate rule, and Internet platforms knowingly hosting such forums would be within their rights to enforce such a rule. The same applies when it comes to WhatsApp and Facebook groups used for sharing photographs of children in a sexual context.

A flexible standard rather than blanket rules

This is really just an illustration of a broader point: when it comes to depictions of child nudity or sexuality in art and fiction, we need to act carefully and to recognize that context matters. This means that it’s impossible to deal with the harmful use of such depictions using blanket rules, whether those are imposed by the government and enforced by the police, or imposed by private Internet companies and enforced by algorithms.

The alternative to a blanket rule is a flexible standard, and in this case flexibility is actually strength. For example, laws that are flexible allow us to punish (severely) an adult for distributing sexual pictures of a child, but without placing equally severe penalties on sexually active teenagers who exchange intimate photographs of each other.

The terms of service of a social media company are flexible when they allow nudity (even child nudity, in appropriate cases) to be depicted in a journalistic or artistic context, even though they disallow it in a sexual context. We are advocating for Facebook and Instagram to make their nudity standards more flexible in exactly this way, and that’s why we’ve joined the National Coalition Against Censorship’s #WeTheNipple campaign.

We want to help Internet companies do a better job at moderating the sexual content that they allow on their platforms, by encouraging them not to adopt blanket rules that treat sexual speech as inherently harmful, but instead to adopt flexible standards that consider the context in which that speech is presented and used. In this way, they can prevent apparently innocent images from being misused in an abusive context, while also avoiding censoring more explicit imagery used in legitimate contexts such as sex education.

That’s why we’re holding an event this May to help bring them together with experts and stakeholders to address a range of difficult grey areas that straddle the line between innocence and exploitation when it comes to child protection and sexual content. For example, should Pretty Baby be available on Google Play? Should search engines be linking to nudist photography websites that focus on children? What safeguards should social media sites take to prevent harm to children who pose for photos in swimwear or underwear? Should pedophiles or registered sex offenders be permitted online, and if so are there limits about what they should be permitted to talk about?

We don’t have the answers to all of these questions. But neither does any other single stakeholder. Ensuring child safety is much more than just a responsibility for governments. It’s also much more than just the responsibility of Internet companies. It’s a shared community responsibility—and that’s why representatives from across a broad range of sectors have to be involved in fulfilling it. We look forward to engaging with them in a nuanced discussion of these difficult questions on May 23, and sharing the results with you for your feedback.

“The protesters went on to complain that the children attending “will be scarred for life” by being seen in the nude by someone who finds them sexually attractive. Is this a valid concern, or a sex-negative projection?” Of course, that’s invalid, as it would require that those children have mind-reading powers. As for a “cure”… God, I feel like we’re talking about vampirism. You know, in many ways, I feel a sense of camaraderie with vampires, and not just because of loli vamps (at least ones who enjoy their condition, not the annoying self-loathing emos).

This article definitely pushes things in the right direction. I still think it’s not the correct direction entirely… considering where the bar is set however, even suggesting as much common sense as here is a daring start.

It’s mind-boggling that in the year 2019 we have to explain to the world that you cannot persecute content creators for the art they create, and thought crime is unacceptable no matter what the excuse! To a “millennial” like myself, it is as ridiculous as it is horrifying… it’s like I’m experiencing a tour back to medieval societies 300 years ago, or at most the 1930s when the Nazis were burning paintings. But to some this is actually the way they think and how they see the world! I’ve accepted that those people are from another reality than the rest of us… the problem is, some of them still write the laws of the society we all live in, and many are desperate to enforce their long outdated views on everyone else.

If teen pornography is harmless to the point that we should legalize it for teens, then looking at teen pornography (self-made, non-violent) should be decriminalized in general. The focus should shift to exploitation (catfishing, revenge porn, sextortion) and away from a blanket prohibition that misunderstands both men and teenagers.

Let me repeat: if the production and dissemination of teen pornography is generally natural, harmless, and unavoidable, if the policing of this material causes more harm than good, then advocating the absolute prohibition of possession and viewing of this material by men simply because they are men, in the absence of definable and specific harm such as sextortion, violence, catfishing, is nothing more than hatred of men forced to subsist in a hated social category.

Claiming an absolute moral distinction between the two forms of consumption of teen pornography is absurd, impossible, and indicates that your organization resides fully within the prohibition regime that is grounded in hatred of pedophiles, just as the Jews were hated under National Socialism.

All your organizational jargon about protecting civil rights is really about how we can all continue to hate pedophiles absolutely, continue to force them under an inescapable mass surveillance regime, pretending to respect their human rights while actually defending the rights of non-pedophiles who get caught up in the violent cyclone of mass hysteria, mass surveillance, mass censorship.

Good luck getting Hitler to sign this armistice.

You’re on the wrong side of history.

Good intentions from the filmmaker? The film basically portrayed child prostitution in a positive light. Especially in the scene where she’s raped by a john. She doesn’t seem to be traumatised at all. The movie fails to show how bad child prostitution is and that’s why it’s pedophile apologist garbage.

You might be interested to hear an opposing perspective from feminist podcaster and author Jamie Loftus in this episode of her Lolita podcast. She is hardly (in your words) a “pedophile apologist”, but she compares this movie favorably to Lolita because it was directed by a woman and tells the story from the girl’s viewpoint, rather than from the man’s. This doesn’t make the imagery less problematic, but I can’t agree with you that it presents child prostitution in a neutral or positive light.

[…] Shields’s early career is largely defined by her naked body. Starting with Pretty Baby in 1978 when Shields was 12 and continuing with The Blue Lagoon, and Endless Love, Shields has sparked a […]