“Knowing there are videos and pictures of my abuse and of me sharing nude images as a minor is embarrassing, to say the least. I constantly feel stupid that I did it. I think it was definitely a mistake and I have added a lot to child sexual abuse material (CSAM) being spread. And I feel like an idiot for that. I really, really do. Fueling addictions, creating a wider market, and making the whole problem worse. I think about it a lot in shame. I wish I could stop younger me from posting and sharing those videos and pictures. As for the pictures and videos my abuser had on his phone, I assume he shared them, but maybe it was just to look at later? I don’t know, to be honest. And I don’t want to know. I wish I could just forget about all of it, but it’s life, my life and I have to suffer consequences, deal with the hand I was dealt, and enjoy the pockets of joy I find in life.”

– Sasha Sloan, CSAM victim

The harm of child sexual abuse material

It is often said that survivors of child sexual abuse (CSA) are revictimized every time a photo or video of their abuse is shared or viewed. However, this is an understatement. In nearly all cases, victims have no way of knowing when this content is viewed or shared. To someone whose abuse has been recorded and posted online, a single person viewing the content once is indistinguishable from, and therefore just as harmful as, ten thousand people viewing it a dozen times each. A more accurate statement is that a victim/survivor is continuously revictimized for every moment images or videos of their abuse continue to exist. With this in mind, it is evident that the best way to support survivors is to ensure the quick and thorough removal of such content while preventing people from viewing it. Unfortunately, the current approach taken by lawmakers, tech companies, and even anti-trafficking organizations consistently fails to do this. The focus on incarcerating people who view illegal content is failing the victims it is meant to protect and causing the prevalence of such content to increase.

It’s a really scary thing to know that people have seen you in such a vulnerable position. Cameras still cause me anxiety to this day, and during my more paranoid moments I’ve ended up checking my bathroom and bedroom for hidden cameras. It’s a huge fear of mine.

Venus, CSAM victim

Defining CSAM

In the digital age, misinformation about child sexual abuse is more widespread than ever, and misusing related terms is becoming an increasingly popular method of attacking political opponents, undermining prevention efforts, and inciting fear and bigotry against minorities. Therefore, clearly defining terminology is a vital part of any discussion about child protection and child sexual abuse prevention. The term “child sexual abuse material” serves as a replacement for “child pornography,” as “pornography” implies that consent was given, which is not possible for sexual content involving minors. CSAM can be defined as “images or videos depicting the real-life sexual abuse of a minor.” Importantly, this does not include fictional depictions of CSA or non-abusive sexual content, such as age-appropriate teen sexting. CSAM is not a legal term, and “child pornography” is generally used in discussions regarding laws related to CSAM.

Failures of a carceral approach

At first glance, a carceral approach to preventing CSAM seems logical. After all, those who produce, distribute, and view such content are undeniably causing harm. If those people are arrested and incarcerated, they are less likely to continue doing that. However, a closer look reveals numerous flaws, some of them arguably more harmful than taking no action to eliminate CSAM. Nowhere is this better demonstrated than in the case of the Childs Play CSAM website, which was operated by Australian police for 11 months in 2016-2017 as part of a sting operation. During this period, the number of posts containing one or more CSAM images on the site more than doubled, from 4,500 to over 12,000. Some of these images were posted by law enforcement officials to maintain their covers once they had gained control of the website.

They might argue in the long term [keeping the website up] will be beneficial to my daughter because it will help them capture other [offenders]. But just sending her image to one offender can turn into it being in the hands of hundreds or thousands of others, hurting her more, not helping her.

Mother of a child whose abuse images were shared on Childs Play

The case of Childs Play may be extreme, but it is not unique. Distributing CSAM under the guise of “protecting children” seems to be an increasingly common tactic for law enforcement. In their 2017 reporting on the Childs Play sting operation, Norwegian news outlet Verdens Gang found that various law enforcement agencies had operated four of the eight largest known CSAM websites on the dark web for a combined total of over 18 months. Among these was Playpen, which the FBI ran for two weeks in 2015 to distribute tracking malware to the devices of individuals accessing the website. The tactic was condemned as unconstitutional by the Electronic Frontier Foundation and even led to the dismissal of evidence against an alleged CSAM viewer in at least one of the resulting trials. In both the Childs Play and Playpen cases, as well as several others, law enforcement’s continued operation of these websites led to thousands of CSAM images being viewed, and potentially downloaded or shared, hundreds of thousands of times, increasing the reach of harmful content and making it harder to remove from the internet.

Recently, corporations and governments have used the existence of CSAM to justify attempts to infringe on people’s privacy. Some may remember Apple’s plan to search every image on users’ devices without their consent, which experts warned could be modified by malicious actors to identify and silence activists, target minorities, and spy on a large portion of the population. In January 2022, the UK government launched its No Place to Hide campaign, which used inflated statistics and manipulative tactics to generate public pushback to Meta’s plan to implement end-to-end encryption for DMs on Facebook and Instagram. In response, experts pointed out that encryption is vital to ensuring internet users’ safety and protecting children.

Prosecutorial overreach

Due to its focus on maximizing legal action, our carceral approach to CSAM tends to ignore the actual impact of the content that is being criminalized, resulting in further harm to innocent individuals, such as teenagers who consensually exchange nude images with other teens. These interactions are considered harmless by child psychology experts, but most child pornography laws allow teenagers to be prosecuted for distribution if they share nudes of themselves with peers. If convicted, these teenagers are required in many regions to register as sex offenders, leading to lost job opportunities, an inability to find housing, and even violence at the hands of vigilantes. Minors on the registry are twice as likely to have been victims of sexual assault within the past year and four times more likely to have recently attempted suicide when compared to their non-registered peers.

The prosecution of teens who engage in harmless sexual activities also introduces a risk of misconduct by prosecutors and law enforcement officers. In a particularly egregious case, police attempted to execute a warrant requiring a 17-year-old boy to receive an erection-inducing injection so investigators could take pictures of his genitals as evidence in a case where he was charged with distributing child pornography for sexting with his girlfriend. It was reported that police had already taken nude photographs of the boy when arresting him, suggesting that such practices may be routine in these cases. The incident left many questioning why such a warrant was granted in the first place and whether similar warrants had been issued in other cases involving teenagers who shared nude images of themselves. Additional concerns emerged when, a year later, the lead detective in the case was accused of having inappropriate relations with two boys on a youth hockey team he coached.

[The police are] using a statute that was designed to protect children from being exploited in a sexual manner to take a picture of this young man in a sexually explicit manner. The irony is incredible.

Court-appointed guardian of a teenager whose genitals police photographed during a child pornography investigation

In addition to teen sexting, prosecutorial overreach is a significant problem in cases involving other forms of non-abusive content. There is a growing movement to expand the scope of child pornography laws beyond what is necessary to protect children. Under these proposed laws, harmless outlets and content such as sex dolls, drawings, and even text-based stories could be banned if they were determined to depict fictional child abuse.

Even non-sexual images of children, such as the ones parents often take to document events and milestones, could be classified as child pornography if prosecutors believe the person possessing the photograph has it for sexual purposes. One Arizona couple experienced the harms of this firsthand when police determined that innocent photos of their children in the bath were evidence that the kids were at risk of being sexually abused. The ensuing legal battle lasted ten years, during which the children were placed into protective custody and separated from their parents and siblings, likely resulting in severe emotional distress. In other cases, police have filed charges and even obtained convictions against individuals who produce, distribute, or possess fictional depictions of abuse, despite no real children being harmed in its production or distribution.

Targeting sex workers, artists, and CSA survivors

Emboldened by law enforcement agencies’ success in broadening the scope of child pornography laws, supporters of the “war on porn” have adopted similar strategies. Many of the organizations behind this movement brand themselves as anti-trafficking groups. However, their actions often extend far beyond this scope, and they frequently conflate legal and non-abusive content with child sexual abuse material. Some even claim that pornography is a root cause of trafficking and CSA, though they consistently fail to provide evidence beyond anecdotes and fallacious comparisons. By exploiting the public’s desire to fight abuse, these groups pushed policies that harm sex workers, CSA survivors, and children while doing little to combat trafficking.

The manipulative and deceitful tactics used by these organizations are not accidental. Their behavior is similar to the denial and cover-ups that often characterize evangelical groups’ responses to allegations of child abuse within their ranks. Unsurprisingly, many “anti-trafficking” organizations share leadership with and receive donations from evangelical groups and other proponents of purity culture. In return, evangelical groups receive a platform to redirect the public’s attention away from the culture and power structure that enables abuse within religious organizations.

Though they have done little to address real CSAM, these strategies have been disturbingly effective at encouraging attacks against marginalized groups and stigmatized communities. Efforts to expand the definition of CSAM to include non-abusive content partially facilitated the rise of the anti-shipping movement, a cult-like community dedicated to harassing those who produce or consume art and other fictional content involving “immoral” relationships.

Anti-shippers regularly submit false CSAM reports against fictional content, clogging up moderation queues and impeding the removal of actual CSAM. Anti-trafficking groups may similarly be at fault for the decreased reliability of legitimate CSAM detection efforts. Religious organizations have also pushed tech and social media companies to adopt unnecessarily restrictive content policies that have since been used to silence sex workers and censor reporting on child sexual abuse, with no impact on the prevalence of CSAM.

Limited options for viewers

When it comes to individuals who view CSAM, there is a substantial disparity between public perceptions and existing research. For example, although less than 10% of child pornography offenders return to viewing illegal material after being convicted, a sample of the general public predicted that this value would be 74%, over seven times the real percentage. The public also significantly overestimated the rate at which CSAM viewers directly abuse children, predicting that 63% of viewers would go on to have sexual contact with a child, 21 times the actual value of 3%. These misperceptions drove support for punitive measures, such as incarceration and sex offender registration, and opposition to non-carceral alternatives. In other words, some of the support for our carceral system results from misconceptions about the risks posed by child pornography offenders.

I didn’t look for professional help because I thought I would get arrested straight away. Plus I had no money to afford it and I don’t think online counseling was even a thing back then.

Alex, former CSAM viewer

Regardless of its origins, the desire to punish people who view CSAM is understandable. After all, their actions directly contribute to the revictimization of a CSA survivor. Nevertheless, like many other elements of a carceral approach, punitive measures may increase the rate at which people view and share CSAM. According to a survey of dark web CSAM viewers, 62% of those searching for illegal content involving children tried to stop viewing such content at least once. However, the same study found that only 3% of viewers received help to stop, while 33% wanted to seek help but were afraid to do so or unable to find support. In many regions, mandatory reporting laws make it impossible for CSAM viewers to disclose their actions to a mental health professional without being reported to law enforcement. Faced with the threat of imprisonment and registration as a sex offender, many CSAM viewers who want to stop choose not to seek support, likely causing them to continue viewing harmful content.

Elements of a non-carceral approach

Over the past couple of years, experts have begun to develop new strategies for preventing CSAM viewing, addressing many of the shortcomings of current carceral methods. At its core, this new approach prioritizes support for CSAM victims over costly and ineffective punishments for CSAM viewers. A critical component of this shift is replacing incarceration with support options to help offenders stop viewing illegal content. Therapy-based support options, such as Prevent it, an experimental online cognitive behavioral therapy program being researched by the Karolinska Institutet in Sweden, are one form of support being explored.

While supporting people who view CSAM instead of imprisoning them is controversial, early statistics demonstrate the benefits of such an approach. After just six months of operation, ReDirection, a self-help program for CSAM viewers, had been visited nearly 9,000 times. Assuming each view was a distinct user, this is almost nine times the number of people sentenced on federal child pornography charges in the United States during a 12-month period. (The actual number of ReDirection users may be lower, as one person could have accounted for several views, but it is still likely several times the number of people arrested.) Of those who chose to provide feedback, 60% said their CSAM use had decreased or stopped due to the program. These numbers will only increase as ReDirection and similar programs become more effective and well-known. If similar programs were to be implemented and widely advertised, rates of CSAM viewing would likely drop significantly, reducing the need for law enforcement operations that keep abusive content online for months after its detection.

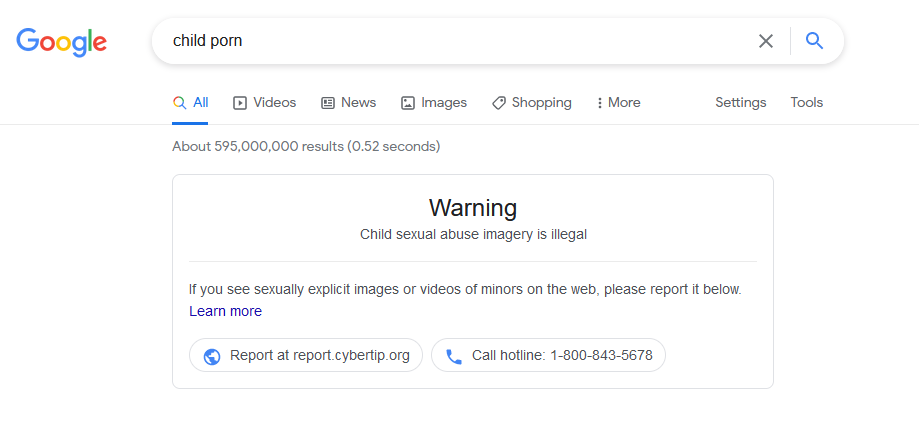

Deterrence

Of course, the best way to help someone stop viewing illegal content is to discourage them from seeking it out in the first place. Initiatives pursuing this goal are known as deterrence programs, which have emerged as a simple-yet-effective method for search engines, social media platforms, and child protection organizations to fight the spread of CSAM online. Though the concept is relatively broad, deterrence programs usually take the form of a warning shown when a user attempts to search for a word or phrase associated with CSAM. These warnings often include instructions on reporting illegal content or resources for anyone struggling to stop viewing such content. Some platforms also disable certain features for content involving minors or filter such content out of search results on particular topics. Though it is difficult to determine the impact of these measures, the Prostasia Foundation’s CSAM Deterrence campaign has directed nearly 200,000 people to support and resources in just four years of operation.

The effectiveness of deterrence programs depends on their ability to grab the attention of people searching for illegal content. Therefore, research on how people start seeking and viewing CSAM is immensely beneficial to the organizations running these programs. For this reason, the survey of dark web CSAM viewers discussed earlier contained several questions about respondents’ early use of CSAM. Shockingly, the results indicated that 70% of CSAM viewers were first exposed to illegal content when they were under age 18, with nearly 40% being younger than 13. Though the survey did not directly ask why respondents started viewing CSAM, several minor respondents mistakenly believed that it was acceptable for them to view sexual content involving their peers. The finding illustrates the importance of educating people about the harmful effects of CSAM and ensuring that minors who view illegal content can receive help to stop.

If I had received proper education on the harms of CSAM and not been misled to think my addiction was inevitable, I never would’ve started [viewing CSAM] in the first place.

Erela, former CSAM addict

Reporting and removal

While analyzing the data regarding the age at which people first view CSAM, researchers speculated on other possible reasons people may begin viewing illegal content. Among the possibilities mentioned was that CSA survivors may search out such content in pursuit of closure or to find and report instances of CSAM depicting them. The latter case is particularly feasible, as there are survivor groups formed for that very purpose. The logistics of platform moderation are beyond the scope of this article, but simple measures, such as discouraging people from falsely reporting fictional content, would increase the speed and accuracy of responses to legitimate reports. In turn, this would reduce the overall prevalence of CSAM and decrease the need for victims to seek out and repeatedly report images and videos depicting their abuse.

I had repressed memories of my abuse for years, and when they resurfaced I kept doubting my memories and myself since I was only 3 years old at the time of the abuse. I wanted proof that I had actually been abused, so I went searching for [CSAM].

Venus, CSA survivor and former CSAM viewer

According to the same survey, over half of CSAM viewers first encounter abusive content involving children accidentally. Unfortunately, many people have little knowledge of how to respond when exposed to such content. Encountering CSAM can be frightening or traumatizing, especially for those who are sensitive to topics related to child abuse, and many people’s instinct is to navigate away as quickly as possible without telling anyone what happened. This reaction is understandable, especially since many regions have no legal protection for people who encounter child pornography by accident or with the intent to report it. In the long term, a non-carceral approach should include changes to legislation that make it easier for child pornography offenders to get help to stop without the risk of being imprisoned. Because the focus would no longer be on arresting people who view illegal content, individuals who stumble across CSAM would feel safer reporting it, increasing the likelihood that it gets taken down.

Our current approach to child sexual abuse material is failing. The system designed to identify and eliminate this harmful content is instead allowing it to spread further. Innocent people are being caught in the crosshairs, while malicious organizations take advantage of the situation to further their agendas. A change is needed, and the research is clear: support works where punishment is falling short. No child should have to live with the knowledge that images and videos of their abuse exist online for the world to see, but until we relinquish our desire for revenge and embrace prevention, survivors will continue to bear this terrible burden.

Author’s Note

Thank you to everyone who took the time to share their experience regarding CSAM with me for this article. If you have encountered CSAM, MAP Resources has a step-by-step Guide to Reporting CSAM that also contains information on seeking support for any mental health issues you experience. The Prostasia Foundation’s Get Help page has additional resources for those in need of support.

A previous version of this article contained a link to the ReDirection self-help program. This was removed due to privacy concerns following the publication of a misleading research paper by the group behind the program.

Context matters in this situation just like every other. There are a lot of varying circumstances in which someone may be in possession of CSAM, the most relevant of which are the circumstances surrounding its creation.

For instance, on one extreme you have those who legitimately commission the production of CSAM, thereby tangibly and materially causing further acts of child sexual abuse. I must say that I very strongly lean towards a carceral approach for such individuals.

On the opposite end of the spectrum, you have teen couples that exchange nudes with one another, or simply teens that have sexual nudes of themselves (there was a rather famous case of a teen being charged as an adult for nude photos of himself). I could not be more opposed to incarceration, or really any punitive measures for that matter, in these contexts.

Not forgetting (grand)parents who have innocent bathtub photos.

After a recent publication by the team behind ReDirection, which is filled with stigmatizing language and misinformation (they didn’t even get the definition of pedophilia correct), I can no longer recommend that people struggling with illegal content engage with the ReDirection self-help program. Considering their willingness to promote harmful stigma and misinformation, I’d consider doing so a privacy risk.

might be worth it to edit the post and add a note at the bottom.

oh good point, didn’t want to edit for transparency reasons but a note would get around that