No Internet company or website operator wants to allow its platform to be used to distribute child sexual abuse material (CSAM). But especially for smaller operators, it has been difficult to access the resources needed to filter out this material quickly and automatically. In 2019, only a dozen of the largest tech companies were responsible for 99% of the abuse images reported to NCMEC (the federal government’s appointed clearinghouse for CSAM reporting). In the past year, this has begun to change as new systems have been brought online to bring CSAM filtering to a broader base of Internet platforms.

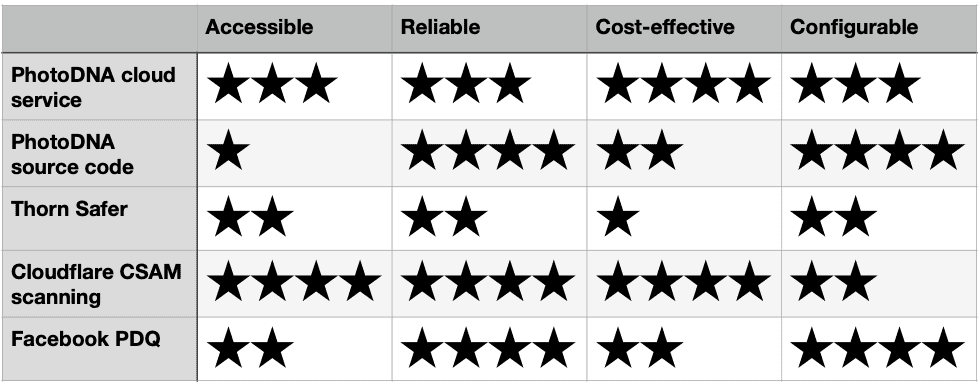

In this article we compare five CSAM-scanning solutions, two of which Prostasia Foundation has implemented on our own systems. We share our opinions on how accessible they are to a website of any size, how reliable they are at detecting abuse images accurately, how cost-effective each solution is to implement, and how configurable it is. Since none of these technologies are intended to be visible to a website’s users, this article is targeted mostly at website administrators and other technically knowledgeable readers.

Here’s how our analysis boils down:

PhotoDNA

The first two options are both variations of Microsoft’s proprietary PhotoDNA algorithm. As we have previously explained, PhotoDNA is a method of comparing image hashes—mathematically-derived representations of images. By scanning uploaded images against a database of hashes of known CSAM, any images that match can be automatically blocked or quarantined, and a report made to NCMEC.

What made PhotoDNA unique when it was first released in 2009 was that it allowed “fuzzy” matches for hashed images; in other words, if an image is mathematically similar to one that has already been identified by a hash, it can still be shown as a match. This is intended to defeat most attempts to disguise CSAM images by altering them slightly in an attempt to evade hash-based filtering.
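To make the difference between exact and "fuzzy" hash matching concrete, here is a minimal sketch. It does not use PhotoDNA, whose algorithm is not public; instead it assumes a toy "average hash" over a tiny grayscale grid, and every function name in it is our own invention for illustration. It shows that a slight brightness change produces a completely different cryptographic digest, while the perceptual hash stays the same.

```python
import hashlib


def average_hash(pixels):
    """Toy perceptual hash (NOT PhotoDNA): one bit per pixel,
    set when the pixel is brighter than the image's mean brightness."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for p in flat:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a, b):
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def exact_digest(pixels):
    """Cryptographic digest of the raw pixel bytes, for comparison."""
    return hashlib.sha256(bytes(p for row in pixels for p in row)).hexdigest()


# A harmless 4x4 grayscale test pattern (dark left half, bright right half).
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]
# The same pattern, brightened slightly.
altered = [[min(255, p + 3) for p in row] for row in original]

# The exact digests differ, so an exact-match filter would miss the copy.
print(exact_digest(original) == exact_digest(altered))  # False
# The perceptual hashes are identical, so a fuzzy filter would still match.
print(hamming_distance(average_hash(original), average_hash(altered)))  # 0
```

Real perceptual hashes are far more elaborate than this, but the principle of comparing hashes by distance rather than by equality is the same.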

But that’s only one piece of the puzzle that allows websites to scan for CSAM: the other piece is a database of hashes of CSAM that has previously been identified. Since the original content cannot be derived from its hash, it is lawful to store hashes of CSAM images and to compare newly uploaded images against them. Therefore NCMEC maintains several databases of image hashes that are made available to major participating platforms. Its principal CyberTipline database is composed of images that NCMEC has assessed as being CSAM, and has reported to law enforcement.

Because access to the original hash databases is considered sensitive, NCMEC will not provide these to smaller platforms. Neither will Microsoft provide the source code of its PhotoDNA algorithm except to its most trusted partners, because if the algorithm became widely known, it is thought that this might enable abusers to bypass it. The secrecy of these two elements of the system explains why two separate PhotoDNA products are listed in our product review.

The PhotoDNA cloud service is the variant that most Internet platforms can gain access to, as a web service provided through Microsoft’s Azure platform. This hides the algorithm itself, and the image hash databases, behind an API (application programming interface), so that rather than scanning the images on its own system, a website operator must upload them to Microsoft for scanning. The PhotoDNA cloud system will report back to the website whether there is a match.

The main limitations on the PhotoDNA cloud service are inherent in the distributed nature of this system: it slows scanning down because of the necessity for images to be sent to Microsoft’s server and scanned. This also reduces the privacy of the system; although Microsoft undertakes not to keep copies of images, there is the possibility that images sent for scanning might be intercepted.

The cloud service does, however, have an experimental “edge match” API that allows hashes to be generated on the client side, and those hashes sent to the Microsoft server in place of the original images. This offers better privacy protection than a solution that involves sending original images. (Somewhat confusingly, these hashes are not PhotoDNA hashes, but an intermediate hash format, so that the confidentiality of the PhotoDNA hashing algorithm can still be preserved.)

Compared with the cloud service, using the PhotoDNA algorithm and hash databases directly would allow a large platform to scan images more quickly, and to configure how they are scanned in ways that the cloud service doesn’t permit—most notably, by configuring the level of “fuzziness” that is allowed in a match.

The cloud service doesn’t permit this to be adjusted, and from our testing, it appears that either the level of allowed fuzziness is set very low, or that the algorithm doesn’t function very well with the low-resolution test images that Microsoft supplies. We deduce this from the fact that almost any of the deformations that we attempted during our implementation of the system for MAP Support Club—including format shifting, cropping, padding, rotating, or watermarking the test images—resulted in a failed match. Oddly, these are exactly the sort of deformations that PhotoDNA is meant to be resilient against.
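The trade-off behind a configurable fuzziness level can be sketched as follows. This is an illustration under our own assumptions, not a description of PhotoDNA's internals: the 16-bit hash values are made up, and `is_match` and `max_distance` are hypothetical names. A threshold that is too strict misses a lightly edited copy, while one that is too lax starts matching unrelated images.

```python
def hamming_distance(a, b):
    """Number of bit positions in which two hashes differ."""
    return bin(a ^ b).count("1")


def is_match(candidate, known, max_distance):
    """Fuzzy match: accept when the hashes differ in at most max_distance bits."""
    return hamming_distance(candidate, known) <= max_distance


# Made-up 16-bit hashes, purely for illustration.
KNOWN = 0x3333               # hash of an image on the block list
EDITED = KNOWN ^ 0b10101     # lightly edited copy: 3 bits differ
UNRELATED = 0x3C5A           # a different image: 8 bits differ

for max_distance in (0, 4, 10):
    print(
        max_distance,
        is_match(EDITED, KNOWN, max_distance),
        is_match(UNRELATED, KNOWN, max_distance),
    )
# 0 False False   (too strict: the edited copy is missed)
# 4 True False    (edited copy caught, unrelated image ignored)
# 10 True True    (too lax: the unrelated image is a false positive)
```

An operator who can tune this threshold can choose where to sit between missed matches and false positives; a service with a fixed threshold makes that choice on the operator's behalf.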

Despite this limitation, Microsoft does allow other configurations to be made by cloud service users. It allows the operator to enable image hash databases from any one or more of the following sources: Canadian industry, U.S. industry, Cybertip.ca, and NCMEC. The severity of abuse images included can also be selected: either sex acts only, or sex acts and “lascivious exhibition” (erotic posing), showing either pre-pubescent or pubescent child victims. There is no particular reason why most platforms would not want to enable scanning for images in all of these categories.

Due to the inability to configure the fuzziness of hash matching, we rated the PhotoDNA cloud service as being both less reliable and less configurable than a system that uses the PhotoDNA algorithm directly. However we rated the PhotoDNA cloud service as being more accessible and cost-effective, because of the convenient API that Microsoft provides to access it. Once approved, access to the system is also free of charge. In comparison, as noted above, access to the original PhotoDNA algorithm is tightly controlled, and the costs of integrating it with a website’s internal systems would be considerably higher.

Thorn Safer

Due to the prevalence of newly self-created images, including sexting images taken and uploaded by teens themselves, scanning for existing CSAM hashes is not a complete solution to prevent CSAM images from appearing online. Thus under heavy pressure from governments to “do more,” the larger platforms have also recently been rolling out AI tools to detect new suspected CSAM images.

Google released its AI tool called Content Safety API in 2018, making it available to other groups on application. Prostasia Foundation applied for access to this tool, but Google declined our request saying that “we believe the Content Safety API may not be a good match for your needs.” The first generally-available commercial product that claims to be able to detect new CSAM images using machine learning is Thorn’s Safer. Although we haven’t been given access to Safer either, we have chosen to make a tentative assessment of it on the basis of publicly available information.

Safer is a hybrid product which combines hash-based scanning with machine learning classification algorithms. Details of how this technology has been trained and how accurately it detects CSAM are sparse, but we do know that Safer’s machine learning has been trained on adult pornography, and that Safer users can contribute directly to the system’s shared image database, without these additions being evaluated by trained assessors.

We also know in general terms that machine learning algorithms for image recognition tend to be both flawed overall, and biased against minorities specifically. In October 2020, it was reported that Facebook’s nudity-detection AI flagged a picture of onions for takedown. It may be that for the largest platforms, AI algorithms can assist human moderators to triage likely-infringing images. But they should never be relied upon without human review, and for smaller platforms they are likely to be more trouble than they are worth.

Although Thorn claims that Safer can detect new CSAM images with greater than 99% accuracy, we have strong reason to be skeptical of this claim in the absence of an independent public evaluation of its effectiveness. We perhaps wouldn’t be so skeptical if Thorn hadn’t played fast and loose with statistics in the past. As of the date of this article, it continues to claim that it used its AI technology to identify over 5,500 child sex trafficking victims in a single year, yet the most accurate sex trafficking statistics that we have reveal this claim to be of dubious accuracy.

In order to properly evaluate the accuracy of Safer’s AI subsystem, we contacted Thorn twice to request more information about their product. Because we received no response to these repeated enquiries, Thorn lost a star for the accessibility of its product, and we have also given it a conservative rating for how accurate it is, knowing some of its limitations. As a cloud-hosted product, it is rated equally as configurable as the other products of this type. We will update these ratings once we are able to learn more about the product.

Another factor on which Thorn scored poorly for Safer was affordability. That’s because although it received a multi-million dollar grant to help develop Safer as a “solution” to child sexual abuse online, it is charging a hefty fee to end users. This ranges from $26,688 all the way up to $118,125 per year in licensing fees, depending on how many images are to be scanned. This takes Safer way outside of the realm of affordability for many smaller web platforms. These considerations combine to leave Safer with our lowest overall rating.

Cloudflare

In our inaugural Hall of Fame this year, we explained why the introduction of Cloudflare’s CSAM Scanning Tool in December 2019 was so revolutionary. To be effective, CSAM scanning must include the broadest possible range of websites, not just those of the tech giants. Less than 1% of illegal child abuse content is found on social media platforms, according to the Internet Watch Foundation (the IWF; another Hall of Fame inductee). Therefore whatever Facebook or Twitter could do to curb the problem would not be nearly significant enough.

But what Cloudflare could do is significant. If enough of its members turned on this one simple setting, about 14.5% of all websites could protect themselves and their users from participating in the spread of known CSAM, without any cost, and without the need for any programming knowledge at all. Much of the CSAM that is posted to the open web by users is found on “chan-style” imageboard websites, whose operators typically don’t have the technical skills to implement PhotoDNA scanning, nor the money to license Safer… but they are often Cloudflare users.

Cloudflare’s CSAM Scanning Tool is by far the simplest tool for this purpose, and it is accessible to any website. On the other hand, this simplicity comes at the cost of some control. Compared with the licensed Microsoft PhotoDNA cloud service, Cloudflare allows only one setting to be configured: whether the “NCMEC NGO” and/or the “NCMEC industry” hash lists are to be consulted. Neither the severity of the images nor the degree of fuzziness is configurable, although we don’t regard the former as a major flaw.

Another difficulty we encountered is that we haven’t been able to test the Cloudflare CSAM scanner, as it does not come with any test images, and the PhotoDNA cloud service test images do not trigger a match. However we can at least confirm that with both hash lists selected, the filter doesn’t appear to be obviously overbroad. We have tested it with a variety of legal images, none of which triggered a match. While we still have major concerns about NCMEC’s transparency, these hash lists appear to be as described.

For websites that already use Cloudflare, there is little reason not to turn this feature on, as we have at Prostasia Foundation. We particularly recommend doing so if you allow users to post content to your website, and if you don’t have the resources to implement one of the other solutions. Cloudflare’s CSAM Scanning Tool receives our top overall rating.

Facebook PDQ

Perhaps the least well known of the CSAM scanning solutions that are available for public implementation is Facebook’s PDQ algorithm. Facebook released the source code of this product in August 2019 as its own in-house alternative to PhotoDNA. Released at the same time was a related algorithm, TMK+PDQF, which is used for hash matching of video content—a capability that isn’t included in any of the other solutions reviewed here.

Microsoft’s choice to limit the distribution of its PhotoDNA code is an approach of “security through obscurity,” which is one reason why a detailed analysis of how it works is not available. Because Facebook’s PDQ code has been released as free and open source software, it does not suffer from this same limitation. This allows programmers and agencies from around the world to pick it over, find bugs, and potentially to improve it.

The code was reviewed by computer scientists from Monash University and the Australian Federal Police in an article that was published in December 2019. The authors of that paper found:

It is a robust and well performing algorithm in the uses for which it was designed—format, resolution and compression changes result in near perfect performance, closely followed by ‘light-touch’ alterations such as text. Heavier, “adversarial” changes (as described by the project authors) such as the addition of a title screen, watermarking and cropping beyond marginal levels result in rapid performance drops.

What this means, in short, is that it’s a decent alternative to PhotoDNA—but that it can still be overcome. Since our testing revealed similar shortcomings in PhotoDNA (at least using the cloud service version and the supplied test images), we have rated the products as mostly equivalent… but with the advantage that PDQ is more accessible, since it is freely available to anyone who wishes to use it.

One downside of PDQ, particularly when compared with Cloudflare’s solution, is that it will require some custom implementation. Facebook only provides the bare bones of a PDQ-based solution. A bigger downside is that Facebook’s solution doesn’t come with access to a database of image hashes, such as those maintained by NCMEC. Therefore, unless you are fortunate enough to have access to a PDQ-compatible database of CSAM image hashes (as far as we know, none are publicly available), you can’t use PDQ to scan for known CSAM, and could only use it as a supplementary filter for images that you want to block locally.
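As a rough idea of what using PDQ as a supplementary local filter might involve, here is a minimal sketch of the matching step only. It assumes that PDQ hashes are 256-bit values written as 64 hexadecimal characters and compared by Hamming distance, and it assumes a match threshold of 31 bits, which should be checked against Facebook's own documentation. Computing the hash of an image requires Facebook's reference implementation and is not shown; the hash strings, the label, and the function names below are placeholders of our own.

```python
# Assumption: treat hashes within 31 differing bits as a match.
# Verify this value against Facebook's PDQ documentation before relying on it.
MATCH_THRESHOLD = 31


def pdq_distance(hex_a, hex_b):
    """Hamming distance between two 256-bit hashes given as 64 hex characters."""
    if len(hex_a) != 64 or len(hex_b) != 64:
        raise ValueError("expected 64 hexadecimal characters per hash")
    return bin(int(hex_a, 16) ^ int(hex_b, 16)).count("1")


def find_local_match(candidate_hex, blocklist, threshold=MATCH_THRESHOLD):
    """Return (label, distance) for the closest block-list entry within
    the threshold, or None when nothing is close enough."""
    best = None
    for label, known_hex in blocklist.items():
        distance = pdq_distance(candidate_hex, known_hex)
        if distance <= threshold and (best is None or distance < best[1]):
            best = (label, distance)
    return best


# Placeholder hashes, not derived from any real image.
local_blocklist = {"spam-banner-001": "f" * 16 + "0" * 48}

near_copy = "f" * 15 + "e" + "0" * 48   # differs from the entry by 1 bit
unrelated = "0" * 64                     # differs from the entry by 64 bits

print(find_local_match(near_copy, local_blocklist))  # ('spam-banner-001', 1)
print(find_local_match(unrelated, local_blocklist))  # None
```

A linear scan like this is only practical for a small local list; a large hash database would need an index built for nearest-neighbor search.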

Conclusion

None of the solutions presented here is a panacea. Despite the popular notion that Internet companies have it within their control to stop child sexual abuse online, they don’t. In fact, the entire notion that censorship and criminalization will be sufficient to eliminate CSAM is unfounded: that approach has failed. Removing CSAM from the web, without addressing the root causes that lead people to upload and share those images in the first place, is just fighting fires. Experts agree: it’s long past time for governments, child safety groups, and industry alike to abandon their myopic focus on censorship, and to embrace a comprehensive, primary prevention approach.

With all that said, when it’s your website that’s on fire, fire-fighting is still needed. For too long however, access to CSAM scanning technology has been closely guarded by a cartel of government-approved censorship service providers, and by the largest tech companies who are given privileged access to these tools. This mistaken attempt at “security through obscurity” has meant that some of the websites that are least able to eliminate CSAM through manual content moderation, also have the least access to technical tools that could help automate this process.

Over the last year, this has begun to change, with the release of several new technical tools for CSAM filtering aimed at platforms of different sizes and with different needs. The most promising of these, and our overall recommendation, is Cloudflare’s CSAM Scanning Tool, due to its simplicity of operation. Cloudflare could do more to encourage its members to use the tool, and we would also like to see its next release being made more configurable. In a solid second place, we also recommend Microsoft’s PhotoDNA cloud service, although implementing it is a slightly more involved process for the website administrator.

Are you a website operator who has attempted to implement CSAM scanning, or has plans to do so? We would be interested to hear about your experiences in the comments.

If you have encountered illegal content involving minors online, you can learn how to report it here.

We are not going to rehabilitate our way out of this issue either anytime soon. Look, as much as I hate to say it, we need very powerful filters. The amount of traffic towards CSAM darkweb sites has exploded. We are not doing nearly enough to crush these degenerates. We need to invest in ways that would utilize PhotoDNA in such a way as to make it very difficult for someone to access known CSAM for main browsers whether we are talking Chrome, Firefox or TOR.

Maybe best case scenario without PhotoDNA censorship deployed to the main browsers, we could reduce traffic by maybe 70% if we invest heavily in both prevention and semi-prevention programs that would make it easier for MAPs to get help so they don’t act on their demented sickness. But this isn’t a complete solution.

The unfortunate reality is that there are always going to be crooks. So prevention and in combination with even semi-prevention like Stoptinow and Priotab will only go so far. (I consider them semi-prevention since they mostly appear to focus on csam possession offenders aka individuals who have already violated law but keep them from continuing or escalating)

A powerful filter if done right could render detected CSAM unviewable. We don’t know yet, but it’s worth really considering.

Imagine if this software can be installed on every single piece of consumer computer. Imagine if it has the ability to completely destroy any image it detects to match identified CSAM. Imagine if this was on every Browser from TOR to Chrome. It would make it profoundly difficult to access known CSAM.

The next step is to be able to disrupt livestreams in real time. Right now, AI is weak, but in 30 years, it will be very powerful. Perhaps powerful enough to detect prepubescent bodies engaging in activities that are illegal to be recorded and would disrupt such live-streams in real time. Such an AI installed on an end users computer might be able to prevent him or her from ever being able to view any image, whether historic or live that is illegal. We will make it impossible for anyone to view real csam online. Ideally, it should be made so difficult that it would actually be easier to commit premeditated terrorism than it would be to view even a single image involving the sexual abuse of a child.

Ultimately, we may never know if these technologies will ever be used in this way, or if they could ever be used in this way without evil pedoscum hacking into them to attempt to circumvent it. Or whether there is a way to safeguard it from such scum. But we ought to really think outside the box with regards to stamping out this problem. Maybe only some of the technological solutions mentioned above will be possible. Maybe and hopefully all of them. But the bottom line is CSAM censorship will continue to play a significant role.

Part II

The Final Solution:

Enlightenment Eugenics through the principles of the enlightenment and respect of individual rights

In the future, it will be possible to customize a perfect baby. Perhaps in this new world, we can customize a baby to be free of addiction of all kinds, including freedom from the capability of becoming addicted to alcohol, texting, social media, pornography, anything you can think of, and of course, CSAM. We will have greater control over AOA (age of attraction) to ensure they all will grow up to be attracted to adults. 18-30 year olds. We can make sure that our designer babies are born with the right genetics and other important biological markers so they grow up to be ethical citizens. There is a fundamental reason why some go out to rape children and why others recoil in horror at the idea. Biology has a lot to do with it. Some grow up in horrible households and never commit these crimes while we have people born in luxury that still commit these atrocities.

Once the age of Enlightenment Eugenics comes, homophobia, transphobia, racism, sadism, hypersexuality, pedophilia, hebophilia, nepiophilia and other degenerate states of the mind to be cured once and for all. We shall ensure all babies are born FREE from such horrific mental states. And we shall deliver the cure to all the living who are cursed with such states. A great new world can emerge out of this, a world of eternal glory, if we can keep it.

Aside from the serious privacy concerns that would come from building any such software into browsers or operating systems, those who want to access such material will work around it. They will create their own browser that uses the tor network if it was necessary, they will create their own operating systems, don’t underestimate their determination and ability.

Of course the bigger issue is that this would completely destroy privacy, and provide a backdoor into every single computer. This would allow for the complete and total tracking of every single person’s computer use.

This kind of scanning tool also likely wouldn’t address these people effectively, it will only work on sites where images are posted directly. I’d imagine the kind of people that would use Tor likely aren’t just posting pictures on a forum, they’re almost certainly uploading everything in encrypted .rar or other such files. This kind of scanning tool only addresses the tip of the iceberg.

You actually just said that?

Let’s not shall we, really not an okay way of thinking and definitely not the right place to be saying it.

He’s either shitposting, or else telling us who he really is. Either way, this post was reported but I’m leaving it up so people can make up their own minds.

There will unfortunately still be a small group of dedicated criminals. But aggressive censorship will make it much more difficult.

It doesn’t have to necessarily include surveillance, would it? I’m mostly thinking if there is a way to autocensor images if the user tried downloading an illegal file (through image cache or affirmatively) or censor images the criminal tries to transfer from an old hard drive to a new one that would have the censor program. The censor program installed on a person’s operating system does not necessarily need to send any info to anyone.

On the topic of browser censoring, I believe Hany Farid when asked about whether it would report on an end user who comes across illegal stuff it censors, he essentially said it wouldn’t.

The way I see it, if none of your information is sent away, it is simply censorship, not a privacy violation. Ultimately we will want to make sure these censorship tools do not get used by the government, whether it is the CIA or FBI to start surveillance. I believe the 4th Amendment of the US constitution could prevent it. We just want a censorship tool while preserving privacy.

Yes, this is unfortunate. And this would limit the effectiveness of browser side censoring. It still will be effective against regular image files though. But I do wonder if operating side censorship might do the trick. The people downloading into encrypted folders will once on their computers choose to open them up. Any file they take out of .rar could be destroyed when operating side censors detect it.

The goal is to make it very very difficult to view, access, save, or distribute this stuff.

Once again, I’m not a computer expert, I just came across the idea by Hany Farid of browser side censorship which will not use any surveillance and wonder if we can somehow apply it to operating side censorship.

Yes, because I believe this could eliminate almost all crime, especially crimes related to sex crimes against children.

Well, when it becomes possible to engineer healthier babies, there is no reason not to. We want healthy, functioning and law abiding citizens who do not commit sexual violence nor non-contact sex crimes of any kind. We want to take humanity to the next stage of evolution by placing it in the hands of every consenting couple of parents. Virtually all parents will opt for designer babies on some level. They may not want to customize the height or hair color, but they definitely will want to make sure their baby does not grow up with severe mental disorders like having pedophilic disorder.

Because it is such a heavily stigmatized crime, even parents who aggressively oppose the concept of designer babies will be begging the state to subsidize engineering that would ensure their baby will grow up free from pedophilic disorder, sadism, low functioning psychopathy or hypersexual disorders. Just as Down Syndrome has almost been eliminated in Iceland due to abortion of Down Syndrome preborns, similar concepts of selective breeding could be applied to other disorders of the brain of preborns, but instead of abortions, perhaps biological engineering of some kind.

From my perspective, if it’s safe and possible to do designer babies in such a way to eliminate crime-prone states of mind such as pedophilic disorder, why should it be simply voluntary. Perhaps a liberal or non-aggression principle based argument could be formulated to make such engineering compulsory. I myself am a libertarian, I voted for the Libertarian Party and I am very open to support such compulsory policies.

My prediction is that by 2100, there will be no more pedophiles or nepiophiles, addictions of all kinds including social media addiction, pornography addictions, food addictions and CSAM addictions will be non-existent thanks to humanity taking the next stage of evolution through the process of Liberal Eugenics.

Designer babies in combination with effective cures to living people born with these disorders will render pedophiles extinct. There is no need to kill pedophiles to achieve this end. A pedophile born in 1980 could be cured in 2050 and live to 2120, spending 70 years free of such horrific disorders. A pedophile who takes such initiatives should be celebrated as contributing to the next step of human evolution and should be thanked for contributing to the extinction of pedophilia.

We can achieve the extinction of the pedophile without committing crimes against humanity and without harming nor killing a single pedophile. I use the term “Final Solution” because this really is the final solution to the problem and could stamp it out once and for all. There is no need to violate the non aggression principle to achieve these ends. A new glorious society can be born out of this.

As an evolutionary biologist, I’ll just comment that the poster’s comments about CRISPRing away items such as homophobia, and sexual dispositions that vary in twin studies, are not possible, since they go beyond what is directly heritable. In any case, genes often have multiple functions and the ‘interactome’ engaged by trying to edit them would be full of hidden pitfalls.